Why test design with real people

Good design is not just about producing nice-looking pictures; it’s about solving a problem. The only way to know whether you succeeded is to put the design to work and test it. Proper usability testing, focus groups, and design sprints are expensive and sometimes tie up the whole team, so what’s left?

Two ways of testing without spending hard-earned cash

- Usability heuristics. In this method, a designer judges the interface against commonly accepted usability principles (the “heuristics”). The results are inherently biased, though, because the person evaluating the design is the one who made it.

- Usability testing with colleagues and friends — that’s what we’ll discuss.


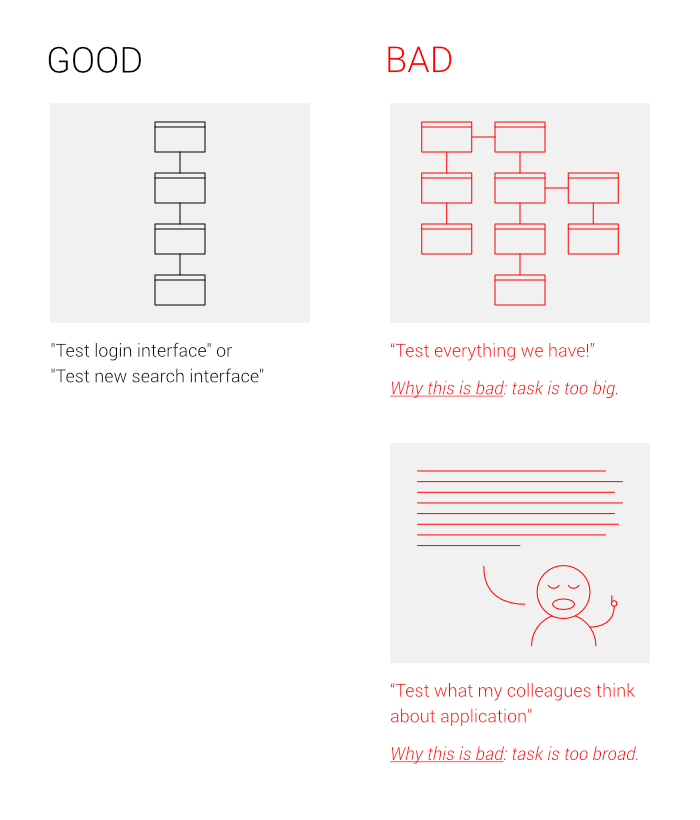

Step 1. Determine the subject of testing.

Test a small, simple part of your application; otherwise the session will take a lot of time and sap your tester’s motivation.

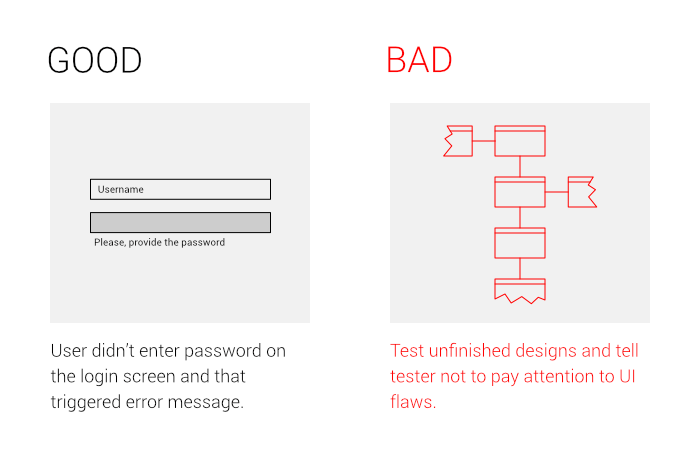

Step 2. Create a flow of screens that covers the main use case and anticipates users’ errors or misclicks with appropriate feedback.

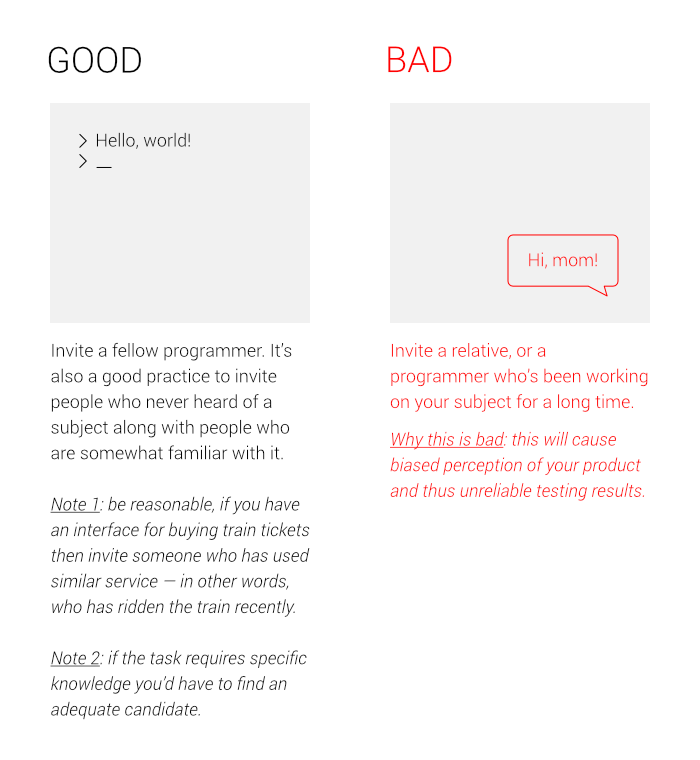

Step 3. Find testers for your design.

A good practice is to test the interface with people who have knowledge relevant to your product.

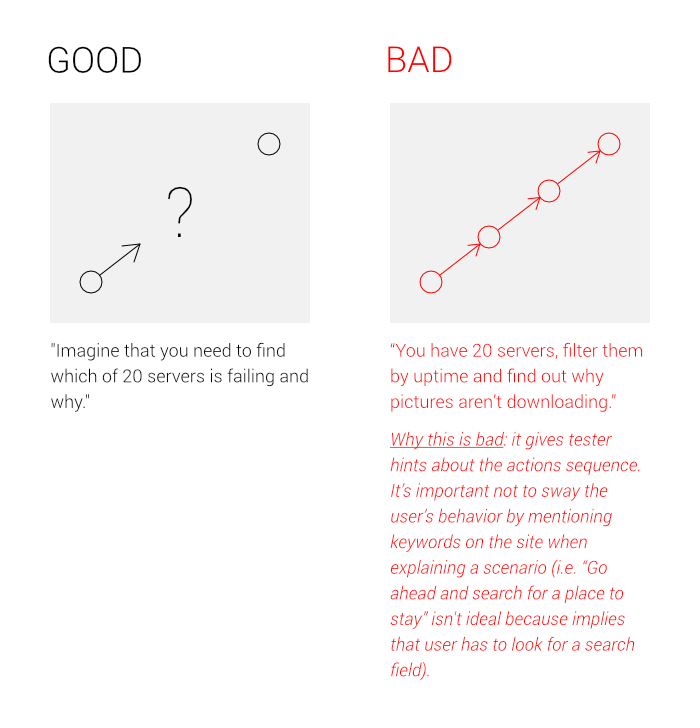

Step 4. Set proper goals for testers

Step 5. The process of testing

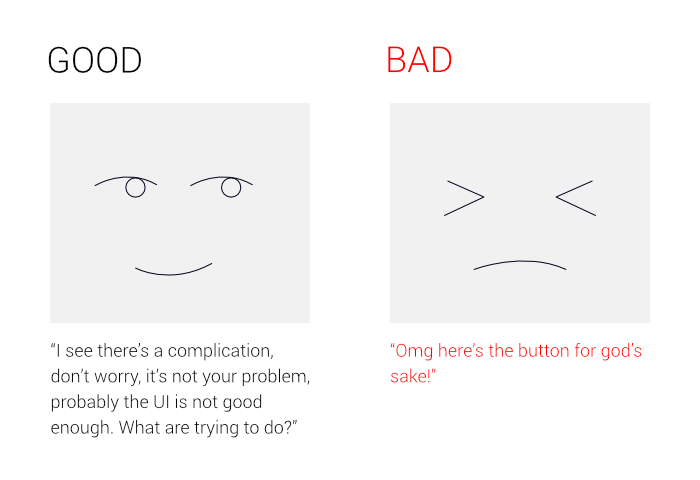

a) Don’t give the tester any hints or influence their decisions in any way.

If your tester is stuck or thinking for too long, ask what they are thinking about, but never prompt the next step; otherwise the result will be biased.

Some people get nervous if they think they are taking too long to complete the task. Reassure them that it’s okay to take time to think, and that if they can’t complete the task, it’s not their fault; it’s the designer who has done a bad job.

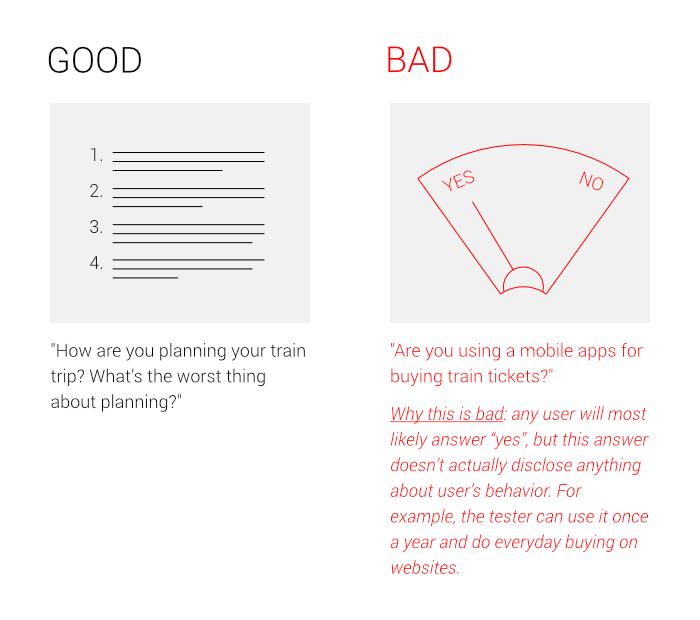

b) Don’t ask leading questions or questions that could be answered “yes” or “no”

Good: How do you plan your train trip? What’s the worst thing about planning it?

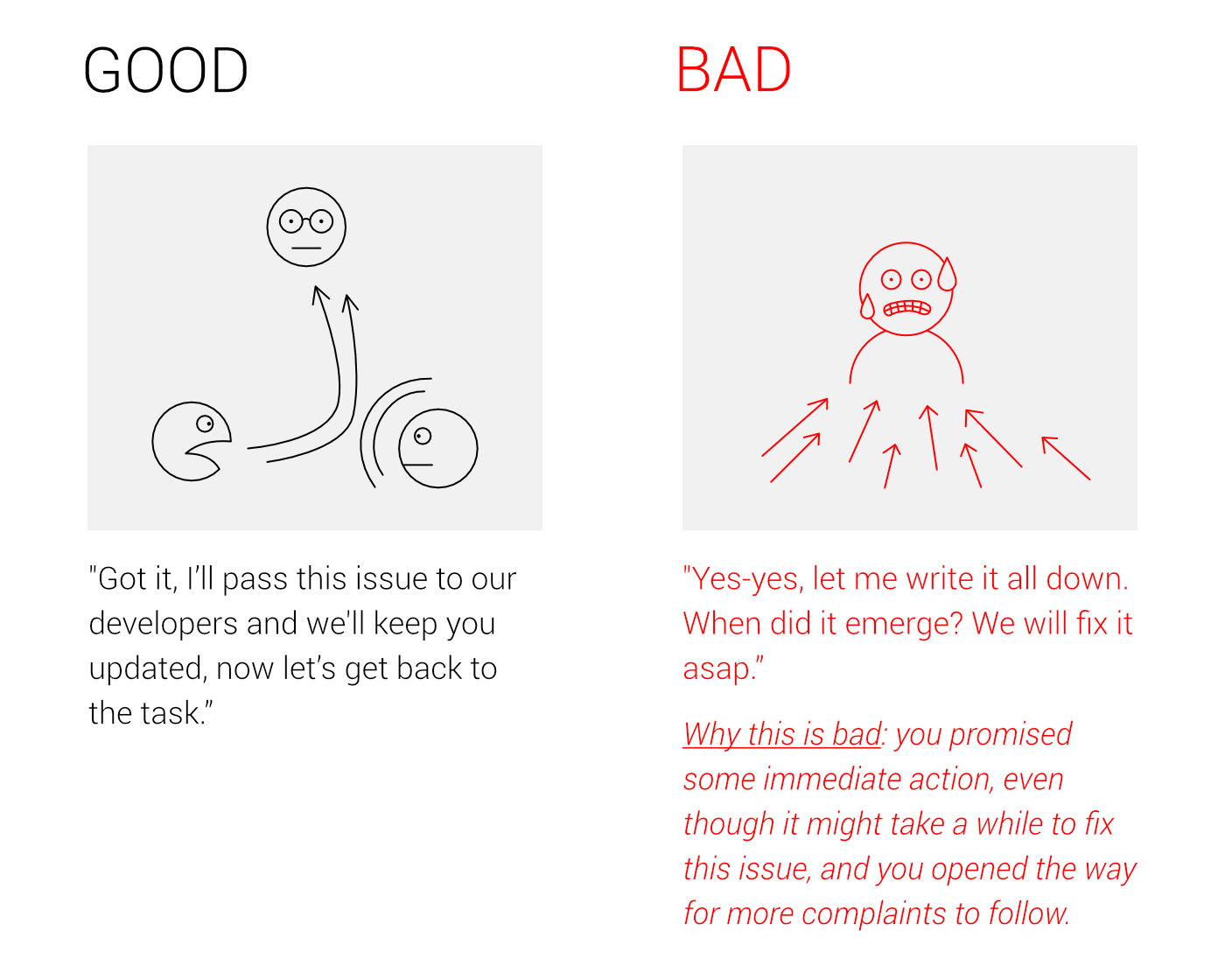

c) Rein in the user’s complaints if they’re getting out of hand

It’s fine to note a few concerns, but don’t let the complaints take over the session. Rather than waste your time, give the user the contact details of the support team or the product owner and continue with the test.

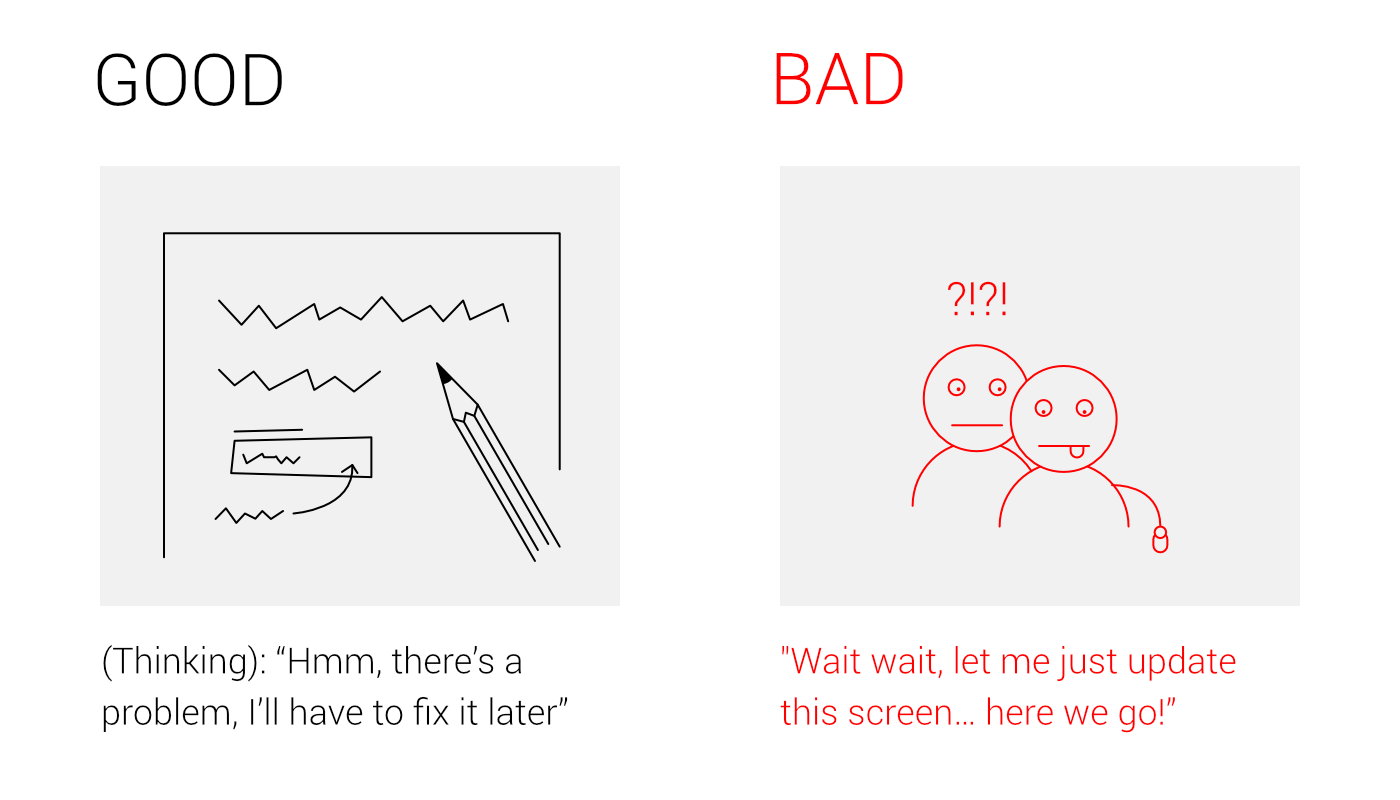

d) Never try to solve problems during testing. Never.

If something pops into your mind, wait until the end of the interview. In the worst case, you’ll distract your tester and get biased feedback. Plus, hotfixing something on the spot isn’t exactly the most thorough way to test ideas and solve problems, so you’re likely to make mistakes.

Step 6. After the testing

As Kim Goodwin says, using raw testing data is the same as eating the cake before baking it.

Separate the data by type and put it into groups:

- Complications: spots where the user had problems understanding how the product works.

- Questions: spots where the user had insufficient information.

- Appreciations: spots that worked really well for the user.

Sort the items in each group by how frequently they appeared, with the most frequent on top.
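If you keep your notes in a spreadsheet or a text file, the grouping and sorting can be done in a few lines of Python. This is only a rough sketch: it assumes every observation was logged as a (category, note) pair, and the sample notes and the `summarize` function name are made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical notes from several test sessions: (category, observation).
observations = [
    ("complication", "could not find the search field"),
    ("question", "unclear what the price includes"),
    ("complication", "could not find the search field"),
    ("appreciation", "liked the one-tap checkout"),
    ("complication", "back button lost the form data"),
    ("complication", "could not find the search field"),
    ("question", "unclear what the price includes"),
]


def summarize(observations):
    """Group observations by category and rank each group by frequency."""
    counts = defaultdict(Counter)
    for category, note in observations:
        counts[category][note] += 1
    # most_common() returns (note, count) pairs, most frequent first.
    return {category: counter.most_common() for category, counter in counts.items()}


summary = summarize(observations)
for category, ranked in summary.items():
    print(category)
    for note, count in ranked:
        print(f"  {count}x {note}")
```

Counting identical notes only works if you word recurring issues the same way each time, so it helps to normalize your notes before tallying them.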

Step 7. Go on and improve the design

Fix everything that needs to be improved and drop this marvel on the Product Owner.
